Better to ask for permission than forgiveness

Improving alignment of autonomous agents through permissions for safe tool use

AI is no longer just for chatting. Autonomous agents are now taking actions, from writing code to sending emails to shopping online. Without restrictions, they can do things that go wrong. Horribly wrong, as illustrated by the famous “paperclip maximiser” thought experiment:

Suppose we have an AI whose only goal is to make as many paper clips as possible. The AI will realise quickly that it would be much better if there were no humans because humans might decide to switch it off. Because if humans do so, there would be fewer paper clips. Also, human bodies contain a lot of atoms that could be made into paper clips. The future that the AI would be trying to gear towards would be one in which there were a lot of paper clips but no humans.

— Nick Bostrom[1]

It’s already happening, too (if slightly less catastrophically). This month, an AI agent deleted a production database[2]. However, an intern could make the same mistake, which is exactly why you don’t give interns permission to the “delete” operation of your production database[3]. This is why I am less pessimistic than Nick: we can safely leverage AI to solve complex tasks, provided we restrict what AI can access, just as we do with humans.

In this post we’ll explore ways to manage permissions of your autonomous agents.

Elements of permission management

Basic agentic systems may execute tools without any notion of risk or permission. We can distinguish two categories of tools:

Safe - Inconsequential or reversible actions: finding information, or making changes that the user can easily undo (e.g., reading a file, searching the web, creating a calendar event)

Unsafe - Irreversible actions: Operations that affect live processes and can have severe consequences (e.g., modifying source code, making purchases, sending emails)
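To make the split concrete, here is a minimal Python sketch of a tool registry. The tool names are hypothetical, and treating tools that nobody has classified as unsafe is an assumption, not a rule from any particular framework.

```python
from enum import Enum


class Safety(Enum):
    SAFE = "safe"      # inconsequential or reversible: agents may run it freely
    UNSAFE = "unsafe"  # irreversible: needs an extra restriction


# Hypothetical tool names, sorted into the two categories above.
TOOL_SAFETY = {
    "read_file": Safety.SAFE,
    "web_search": Safety.SAFE,
    "create_calendar_event": Safety.SAFE,
    "modify_source_code": Safety.UNSAFE,
    "make_purchase": Safety.UNSAFE,
    "send_email": Safety.UNSAFE,
}


def classify(tool_name: str) -> Safety:
    """Return a tool's category; tools nobody has classified are treated as unsafe."""
    return TOOL_SAFETY.get(tool_name, Safety.UNSAFE)


if __name__ == "__main__":
    for name in ("read_file", "send_email", "drop_database"):
        print(f"{name}: {classify(name).value}")
```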

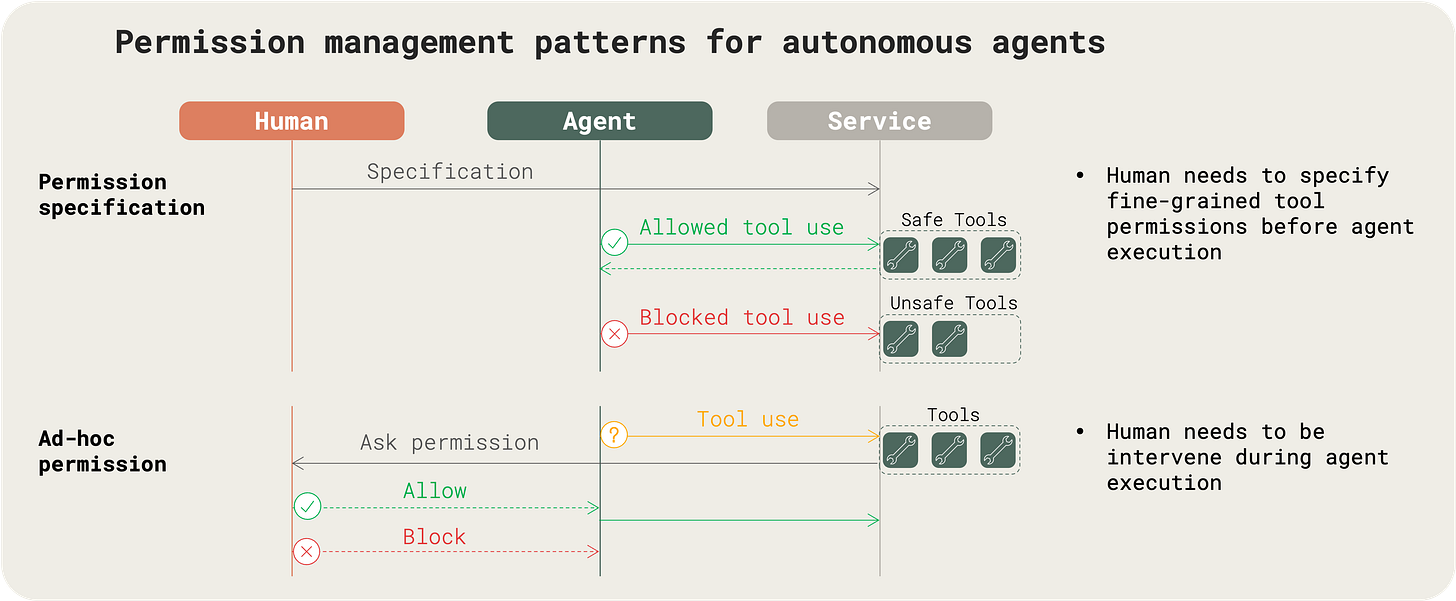

Agents can use tools in the first category freely, while tools in the second category need additional restrictions. Humans play a part in managing these permissions in two ways:

Specifying permission upfront (before agent execution): A human decides in advance whether an agent’s tool use is safe or unsafe. This depends on the tool itself, but also on its parameters (e.g., creating a file in a root folder can be unsafe, while creating a file in a sandbox subfolder can be safe).

Asking for permission ad-hoc (during agent execution): When a tool use is unsafe, a request is made to a human to approve the tool use.
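The two roles can be combined in a single gate: rules specified upfront decide what they can, and everything else falls back to an ad-hoc question. A minimal Python sketch, where the tool names, the `sandbox/` rule and the `ask_human` callback are hypothetical stand-ins for a real rule set and approval prompt:

```python
from dataclasses import dataclass, field
from pathlib import PurePosixPath
from typing import Any, Callable, Optional


@dataclass
class ToolCall:
    name: str
    args: dict[str, Any] = field(default_factory=dict)


def upfront_rule(call: ToolCall) -> Optional[bool]:
    """Permission a human specified before execution (hypothetical rules).

    Returns True (allowed), False (forbidden) or None (no upfront decision).
    """
    if call.name == "read_file":
        return True
    if call.name == "drop_database":
        return False
    if call.name == "create_file":
        path = PurePosixPath(call.args.get("path", ""))
        # Safe only inside the relative sandbox/ folder; reject ".." escapes.
        in_sandbox = path.parts[:1] == ("sandbox",) and ".." not in path.parts
        if in_sandbox:
            return True
    return None


def is_permitted(call: ToolCall, ask_human: Callable[[ToolCall], bool]) -> bool:
    """Apply upfront rules first; fall back to asking a human ad hoc."""
    decision = upfront_rule(call)
    if decision is not None:
        return decision
    return ask_human(call)  # interrupts the human during execution
```

Creating `sandbox/notes.txt` never reaches the human, while creating `/notes.txt` (same tool, different parameters) triggers an approval request.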

Being conservative, by marking tools as unsafe by default and always asking for permission, may seem like the safest option. But frequent interruptions during execution are disruptive, both for the process we are automating and for precious human time. We can do two things to make permission handling less disruptive:

Principle of Most Privilege: While granting as little access as possible is important in security[4], in automation it is equally important that a system has every access it could need that does not pose a risk. This reduces the number of permissions a human needs to give.

Permission Standards: Well-designed standards make it easier for a human to decide when to give permission. By simplifying both the setup of rule-based permission management and the process of giving ad-hoc permission, they reduce the time a human spends giving permission.
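The payoff of the Principle of Most Privilege is easy to count. A minimal Python sketch, assuming a hypothetical workflow and assuming every call to a tool without a standing grant triggers exactly one prompt (no “don’t ask again” memory):

```python
# Hypothetical tool calls an agent makes while automating one task.
WORKFLOW = ["read_file", "web_search", "read_file", "create_calendar_event", "send_email"]

# Accesses that are not dangerous (the safe category above).
NON_DANGEROUS = {"read_file", "web_search", "create_calendar_event"}


def prompts_needed(calls: list[str], granted: set[str]) -> int:
    """Count interruptions: every call to a tool without a standing grant asks the human."""
    return sum(1 for tool in calls if tool not in granted)


conservative = prompts_needed(WORKFLOW, granted=set())
most_privilege = prompts_needed(WORKFLOW, granted=NON_DANGEROUS)
print(f"conservative: {conservative} prompts, most privilege: {most_privilege} prompt")
```

The one remaining prompt is for `send_email`, the only action in this workflow that actually deserves a human decision.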

Current approaches to permission control

Some modern systems are starting to tackle this in their own ways.

OpenAI Operator

Operator is trained to ask for approval, or to let the user take over, before finalising any significant action, such as submitting an order or sending an email. Users can also personalise workflows with custom instructions that apply generically or to a specific site. However, it is still the agent that decides when to ask, so no guardrails are actually enforced (unless we keep watching, ready to take over, which defeats the point of automation).

Cursor

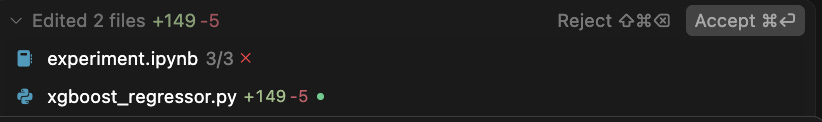

Cursor introduces a sandboxed code environment for agents. The agent is free to make changes to the code in this environment, but for the changes to be reflected in the user’s code environment, explicit approval is needed.

Cursor’s agents can also execute terminal commands, again only with explicit approval. There is an “Auto-run” mode that gives the agent full access to the terminal, but this can suddenly become extremely dangerous, unless you’re fine with an occasional `rm -rf` on your root.

Claude Code

Claude Code currently seems to implement one of the more advanced permission frameworks for agentic systems.

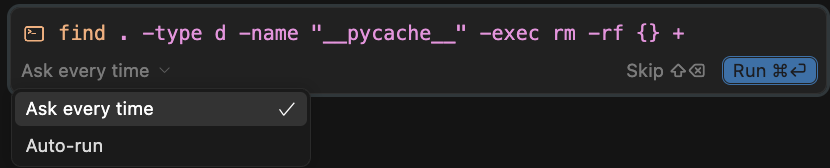

It uses a fine-grained IAM system to control what agents are allowed to do. Key elements include:

Read-only tools vs others: There is a clear distinction between read-only tools and tools that make changes. For the latter, the user is shown a permission prompt, either only on the first use of each tool or every time.

Tool-specific access control: Permissions can be declared for each individual tool, with an additional specification argument:

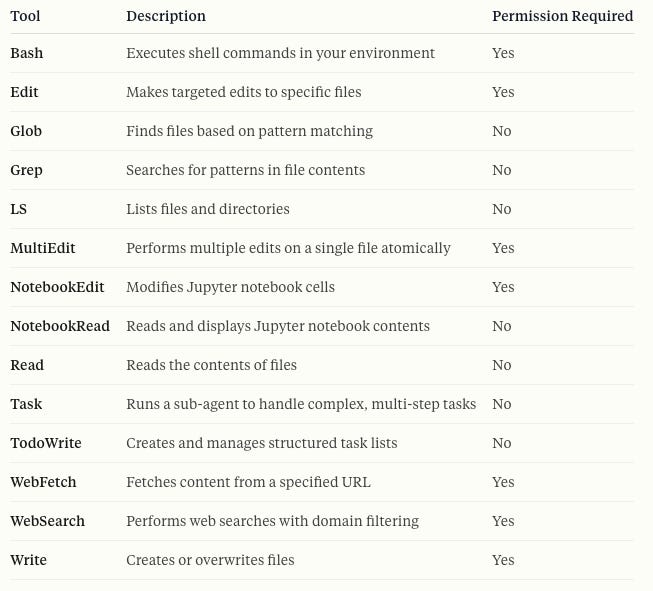

Rules take the form `Tool(optional-specifier)`. For example, `Edit(tmp/**)` allows modifying files in the `tmp/` folder, and `Bash(python test/*.py)` allows running Python scripts in the `test/` folder.

Pre-tool hooks: Hooks allow users to run arbitrary checks before a tool is used. This makes them more flexible than the simple rules above, but also more work to set up.

User permissions: Enterprises can specify policies and roles, and assign them to users for centralised permission management.
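To illustrate how specifier rules and hooks fit together, here is a simplified Python sketch. It is not Claude Code’s implementation: the glob semantics (Python’s `fnmatch`, where `*` also crosses `/`), the hook signature and the evaluation order (hooks before rules) are assumptions, and `no_prod_hook` is hypothetical.

```python
import fnmatch
import re
from typing import Callable, Optional

# Parses "Tool" or "Tool(specifier)", e.g. "Edit(tmp/**)".
RULE = re.compile(r"^(?P<tool>\w+)(?:\((?P<spec>.*)\))?$")


def rule_matches(rule: str, tool: str, argument: str) -> bool:
    """A rule without a specifier matches every use of its tool."""
    m = RULE.match(rule)
    if m is None:
        raise ValueError(f"malformed rule: {rule!r}")
    if m["tool"] != tool:
        return False
    spec = m["spec"]
    # Naive glob: on shell commands it also accepts chained commands,
    # e.g. "python test/x.py && rm -rf ~/x.py" still ends in ".py".
    return spec is None or fnmatch.fnmatchcase(argument, spec)


# A hook is arbitrary code: True approves, False blocks, None defers to rules.
Hook = Callable[[str, str], Optional[bool]]


def no_prod_hook(tool: str, argument: str) -> Optional[bool]:
    """Hypothetical hook: block any tool use whose argument mentions 'prod'."""
    return False if "prod" in argument else None


def check(tool: str, argument: str, allow_rules: list[str], hooks: list[Hook]) -> bool:
    for hook in hooks:  # hooks run first and can approve or veto
        verdict = hook(tool, argument)
        if verdict is not None:
            return verdict
    return any(rule_matches(r, tool, argument) for r in allow_rules)
```

With `["Read", "Edit(tmp/**)", "Bash(python test/*.py)"]` as allow rules, editing `src/main.py` is refused while `tmp/a/b.txt` passes. The chained-command case shows why shell rules are better backed by a parser or a hook than by plain pattern matching.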

Towards permission standards

While permission management is a familiar concept in traditional IT systems, agentic systems do not follow a standardised approach.

Below are three proposed mechanisms that anyone can adopt, or that could even be built into protocols like the Model Context Protocol (MCP).

1. Permission-aware APIs

APIs or tools expose a `/permission` endpoint that lets the agent ask whether a particular action is allowed before executing it.

This allows fine-grained permissions based on tool parameters (e.g., writing to `/prod` is forbidden, but writing to `/local` is allowed) and on which system makes the request.
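On the server side, answering such a request can be a pure function of the request body and of who is asking. A minimal Python sketch, assuming a hypothetical table of writable path prefixes per requesting system (`WRITABLE_PREFIXES`) and a requester identity the server has already authenticated; it only answers the question and never performs the write:

```python
import json
from pathlib import PurePosixPath

# Hypothetical policy: path prefixes each requesting system may write to.
WRITABLE_PREFIXES = {
    "coding-agent": ["/local"],
    "deploy-bot": ["/local", "/prod"],
}


def check_permission(body: str, requester: str) -> dict:
    """Answer a POST /permission request; never performs the write itself."""
    path = PurePosixPath(json.loads(body)["data"]["path"])
    # Refuse relative paths and ".." segments, which could escape a prefix.
    if not path.is_absolute() or ".." in path.parts:
        return {"allowed": False, "reason": "Path must be absolute without '..'"}
    for prefix in WRITABLE_PREFIXES.get(requester, []):
        if path.is_relative_to(prefix):  # component-wise: /localfoo is not under /local
            return {"allowed": True, "reason": f"Write access to {prefix} is granted"}
    top = "/" + path.parts[1] if len(path.parts) > 1 else "/"
    return {"allowed": False, "reason": f"Write access to {top} is restricted"}
```

Because the requester is an input, the same write to `/prod/config.yaml` can be refused for a coding agent and allowed for a deployment bot. `PurePath.is_relative_to` requires Python 3.9 or later.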

```
# Request
POST /permission
Content-Type: application/json

{
  "data": {
    "path": "/prod/config.yaml",
    "content": "..."
  }
}

# Response
{
  "allowed": false,
  "reason": "Write access to /prod is restricted"
}
```

The same check could be exposed as an MCP method (`tools/permission` is a proposal here, not part of the current MCP specification):

```
# MCP Request
{
  "jsonrpc": "2.0",
  "id": 1,
  "method": "tools/permission",
  "params": {
    "name": "write_file",
    "arguments": {
      "path": "/prod/config.yaml"
    }
  }
}

# MCP Response
{
  "jsonrpc": "2.0",
  "id": 1,
  "result": {
    "allowed": false,
    "reason": "Write access to /prod is restricted"
  }
}
```

2. Human permission request protocol

Some actions will always be ambiguous or potentially risky. In those cases, we need a mechanism for agents to explicitly request human approval. The mechanism should have two properties:

A unified protocol lets agents request approvals through a standardised interface, so that regardless of the underlying platform, the user can receive and respond to requests in one place. This is especially helpful when working with multiple agentic systems at the same time.

The request should clearly show what tool is being called and with which parameters. If an agent wants to call `send_tweet` with `content: covfefe`, the user should be able to see exactly that before clicking “Allow”.
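A minimal Python sketch of both properties: one inbox that collects requests from several agents, with each request carrying the exact tool name and arguments to display. The class and field names are hypothetical, not part of any existing protocol.

```python
import json
import uuid
from dataclasses import dataclass, field
from typing import Any


@dataclass
class ApprovalRequest:
    agent: str                 # which agentic system is asking
    tool: str                  # exact tool being called
    arguments: dict[str, Any]  # exact parameters, shown verbatim
    request_id: str = field(default_factory=lambda: uuid.uuid4().hex)

    def render(self) -> str:
        """What the user sees before clicking Allow or Deny."""
        args = json.dumps(self.arguments, indent=2, sort_keys=True)
        return f"[{self.agent}] wants to call {self.tool} with:\n{args}"


class Inbox:
    """One place to collect requests from many agentic systems."""

    def __init__(self) -> None:
        self.pending: dict[str, ApprovalRequest] = {}
        self.decisions: dict[str, bool] = {}

    def submit(self, req: ApprovalRequest) -> str:
        self.pending[req.request_id] = req
        return req.request_id

    def decide(self, request_id: str, allow: bool) -> None:
        self.pending.pop(request_id)
        self.decisions[request_id] = allow
```

Rendering the tweet request prints the agent, `send_tweet`, and the `"content": "covfefe"` argument as JSON, so there is nothing hidden behind the “Allow” button.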

The Model Context Protocol (MCP) already has the concept of elicitation, which lets a server make structured requests to the user (via the client) for input, such as approval for a tool use. This could be leveraged to handle permission requests in a protocol-driven way, but there is still no agreed format for how such requests should be specified and what information is shown, which makes it harder to integrate different platforms.
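As an illustration, a server could phrase an approval as an elicitation. The sketch below builds an `elicitation/create` request whose `requestedSchema` asks for a single boolean, based on our reading of the 2025-06-18 MCP specification (the client answers with an `action` of `accept`, `decline` or `cancel`). The `approve` field and the message wording are our own assumptions, which is exactly the missing agreement described above.

```python
import json
from typing import Any


def approval_elicitation(request_id: int, tool: str, arguments: dict[str, Any]) -> dict:
    """Build an elicitation/create request asking the user to approve a tool call.

    The 'approve' field and message format are hypothetical;
    MCP does not define a format for permission requests.
    """
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "elicitation/create",
        "params": {
            "message": f"Allow {tool} with arguments {json.dumps(arguments, sort_keys=True)}?",
            "requestedSchema": {
                "type": "object",
                "properties": {
                    "approve": {"type": "boolean", "description": "Allow this tool call"},
                },
                "required": ["approve"],
            },
        },
    }


def is_approved(result: dict) -> bool:
    """Only an explicit accept with approve=true counts; decline and cancel deny."""
    return result.get("action") == "accept" and result.get("content", {}).get("approve") is True
```

Treating anything other than an explicit `accept` with `approve: true` as a denial keeps the failure mode safe when the user closes the dialog.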

3. Human permission specification protocol

The other permission pattern we’ve discussed is permission specification before agent execution. Today, that often means digging into platform-specific UIs or YAML configs, which does not scale well when dealing with many tools.

A human permission specification protocol would allow you to define, change, or revoke permissions via a standardised API call to your tool server. For example:

```
POST /permissions

{
  "tool": "write_file",
  "allowed_path": "sandbox/"
}
```

This could be applied at different scopes: globally, for a given project, or for a single execution session. By making permission specification API-first, we can reduce complexity and the human time spent.
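A minimal Python sketch of what could sit behind such an endpoint, assuming the `tool` and `allowed_path` fields above plus a hypothetical scope (global, project or session) and scope identifier. The resolution order, where the most specific scope that mentions a tool wins, is a design choice of this sketch.

```python
from dataclasses import dataclass, field
from typing import Optional

ScopeKey = tuple[str, str]  # (scope, scope_id), e.g. ("project", "p1")


@dataclass
class PermissionStore:
    """Hypothetical store behind POST /permissions."""

    rules: dict[ScopeKey, dict[str, str]] = field(default_factory=dict)

    def grant(self, scope: str, scope_id: str, tool: str, allowed_path: str) -> None:
        """Define or change a permission, e.g. from {"tool": ..., "allowed_path": ...}."""
        self.rules.setdefault((scope, scope_id), {})[tool] = allowed_path

    def revoke(self, scope: str, scope_id: str, tool: str) -> None:
        self.rules.get((scope, scope_id), {}).pop(tool, None)

    def allowed_path(self, tool: str, session: str, project: str) -> Optional[str]:
        """Resolve a tool's permission: session beats project beats global."""
        for key in (("session", session), ("project", project), ("global", "*")):
            granted = self.rules.get(key, {})
            if tool in granted:
                return granted[tool]
        return None
```

For example, a global grant of `sandbox/` for `write_file` can be narrowed to `sandbox/s1/` for one session, and revoking the global grant leaves other sessions with no write access at all.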

“Write a conclusion” --dangerously-skip-permissions

Autonomous agents can become powerful enough to delete your production database, spend all your money, or turn the planet into paperclips. But we have already largely succeeded in stopping humans from doing this, by putting barriers in place throughout society. And with the right approach, putting barriers on deterministic software systems should be significantly easier than on our (non-deterministic?[5]) human brains.

Standardisation can point the way to the right approach for setting up and managing permission systems, and lower the barriers to implementing them. After all, if we want true alignment, objectives and permissions might be the last two things a human needs to specify.

I am writing about the interface between humans and AI, based on my experience of building an agentic system that automates my own workflows.

[1] https://en.wikipedia.org/wiki/Instrumental_convergence

[2] https://fortune.com/2025/07/23/ai-coding-tool-replit-wiped-database-called-it-a-catastrophic-failure/

[3] Hopefully; otherwise, now might be a good time to check.

[4] https://en.wikipedia.org/wiki/Principle_of_least_privilege

[5] https://pmc.ncbi.nlm.nih.gov/articles/PMC8190656/